AI Week In Review 24.07.06

Kyutai's Moshi, InternLM 2.5 7B with 1M context, Lagent, Phi-3-mini update, Brave BYO model, Suno's iOS app, Dolphin Vision 72B, ElevenLabs Voice Isolator, Perplexity Pro Search upgrade, GraphRAG.

Top Tools & Hacks

The release and tool of the week is Moshi, the first release from French AI startup Kyutai, which came out of nowhere. Moshi is an end-to-end voice and text model, similar to GPT-4o voice mode. The difference is that Moshi is a 7B-parameter audio-to-audio LLM that is available to try right now.

The Kyutai demo showed off the “smooth, natural and expressive voice” and low latency, but YouTube AI reviewers like MattVidPro shared hilarious interaction fails as well. Still, this was the work of a small team over 6 months. Moshi is interesting but not yet useful, and it will only get better.

Kyutai posted this with the announcement:

Developing Moshi required significant contributions to audio codecs, multimodal LLMs, multimodal instruction-tuning and much more. We believe the main impact of the project will be sharing all Moshi’s secrets with the upcoming paper and open-source of the model.

Open audio-to-audio AI models would be a great contribution.

AI Tech and Product Releases

This was a big week for open-source LLM releases.

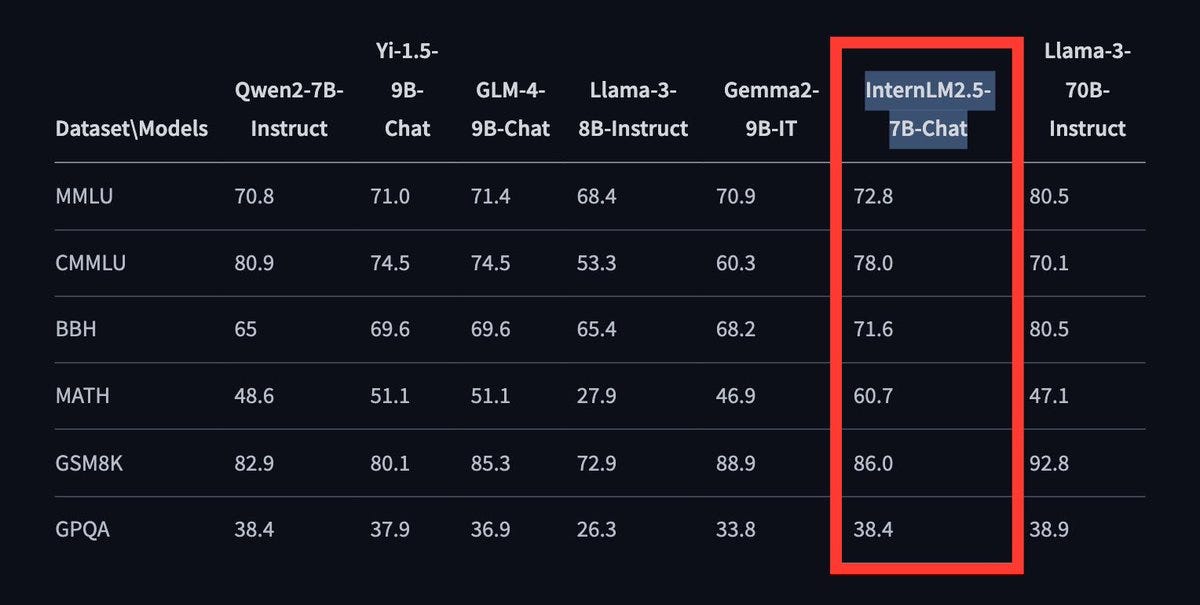

Shanghai AI Lab has released InternLM 2.5 7B, a new state-of-the-art open-source 7B LLM with a 1-million-token context window. It beats Llama 3 8B on every reported metric and is now the leading sub-10B model on the new HuggingFace leaderboard; it even beats the much larger Llama 3 70B on MATH. InternLM 2.5 7B is available on HuggingFace, and the project page is on GitHub.

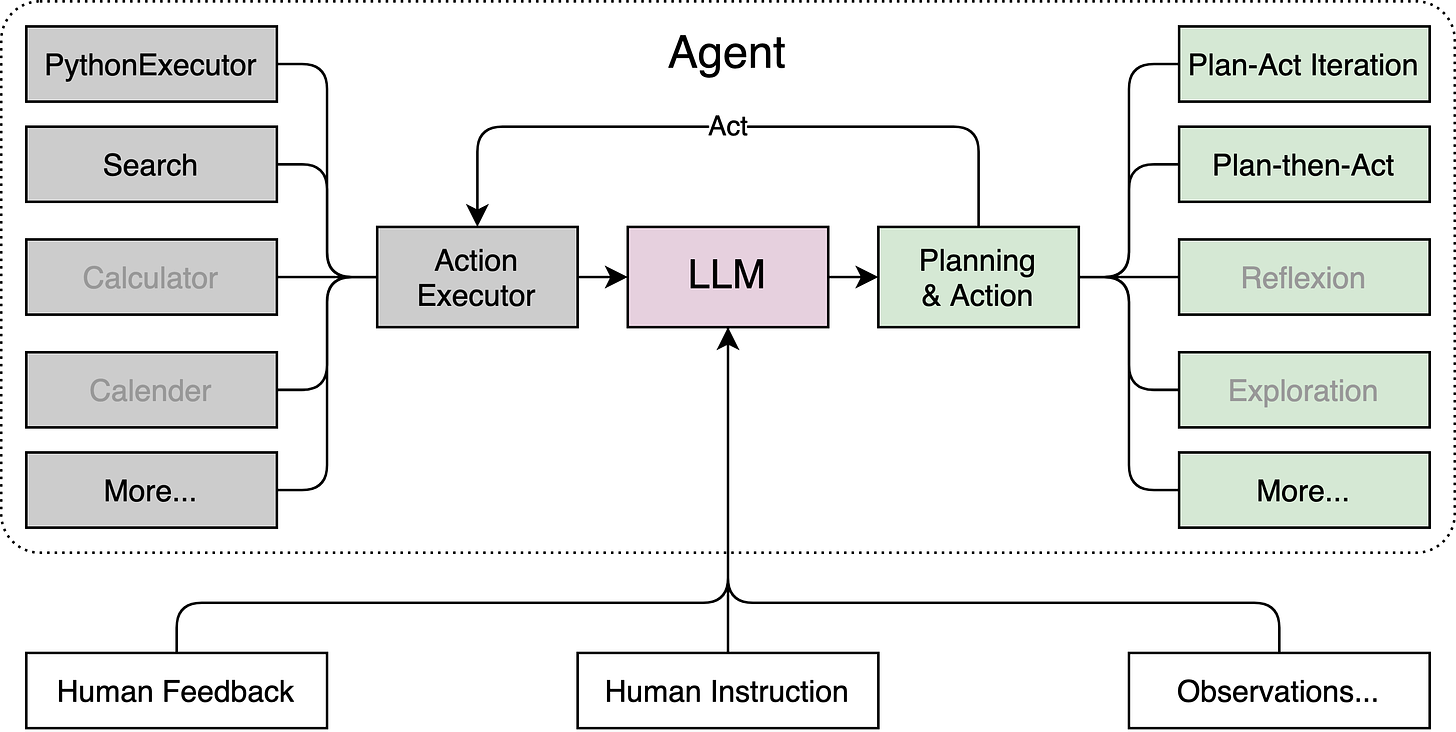

To support agentic AI and tool use for the LLM, they also released their own agentic framework called Lagent. It is a lightweight open-source framework that provides a Python code interpreter, search, and the ability to plug in tools, so users can efficiently build large language model (LLM)-based agents.

Microsoft updated Phi-3-mini, and it got markedly better on some tasks and benchmarks. Rohan Paul on X notes a massive improvement on JSON structured outputs, from 1.9 to 60.1. For coding, the jumps are 33→93 in Java, 33→73 in TypeScript, and 27→85 in Python.

Meta has released their multi-token prediction LLMs on HuggingFace. In April, Meta published a paper on training better and faster LLMs using multi-token prediction. To enable further exploration by researchers, Meta has now released pre-trained models for code completion using this approach.

Brave browser now lets you bring-your-own-model, so you can plug your choice of AI model, local or via API, into the Brave browser. You can install the model via Ollama and plug it into Leo. Brave says, “The local LLMs landscape has evolved so fast that it is now possible to run performant local models on-device in just a few simple steps.”
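For readers curious what "local via Ollama" looks like in practice, here is a minimal sketch of talking to a locally served model over HTTP. It assumes Ollama is running on its default local address and that a model has already been pulled; the model name `llama3` and the helper names are placeholders chosen for illustration. How Brave Leo itself is configured is not shown here.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if your install differs.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming chat request for a local model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON reply instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(model: str, prompt: str) -> str:
    """Send the request and return the reply text.

    Requires a running Ollama server with the named model installed.
    """
    with urllib.request.urlopen(build_chat_request(model, prompt)) as resp:
        return json.loads(resp.read())["message"]["content"]
```

With the server running, `ask_local_model("llama3", "Summarize this page in one line.")` would return the model's reply as a string. Nothing leaves the machine, which is the main appeal of the bring-your-own-model setup.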

ElevenLabs’ just-announced Voice Isolator promises to extract crystal-clear speech from any audio. This new free tool helps podcasters and content creators get rid of unwanted background noise. They have a great demo here.

Last week, we mentioned neat new Figma AI features, like Make Design. But this week, Figma suspended the AI design feature amid copying allegations. A user shared how he asked for a design of a simple "weather app" and got something almost identical to the iOS weather app. The CEO of Figma acknowledged the problem and almost immediately paused the AI feature.

Perplexity has upgraded its Pro Search to “tackle more complex queries, perform advanced math and programming computations, and deliver even more thoroughly researched answers.” This is available to Perplexity Pro users.

YouTube’s updated eraser tool removes copyrighted music without impacting other audio. This makes it easier for content creators to comply with copyright while sharing video from other sources.

Suno released a new app for iOS.

Two Qwen2 fine-tunes were released this week:

Arcee Agent 7B, a Qwen2 fine-tune for function calling and tool use, from Arcee AI.

Dolphin Vision 72B, the largest open-source VLM yet, based on Qwen2 and from Eric Hartford of Cognitive Computations.

AI Research News

Microsoft has open-sourced GraphRAG on GitHub. GraphRAG uses an LLM to automate the extraction of a rich knowledge graph from a collection of text documents, enabling retrieval-augmented generation (RAG) on knowledge graphs.

The research behind this project was published in “From Local to Global: A Graph RAG Approach to Query-Focused Summarization.” They show GraphRAG yields superior results to naive RAG:

LLMs can successfully derive rich knowledge graphs from unstructured text inputs, and these graphs can support a new class of global queries for which (a) naive RAG cannot generate appropriate responses, and (b) hierarchical source text summarization is prohibitively expensive per query.
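To make the idea concrete, here is a toy sketch of the graph-then-summarize flow. It is not Microsoft's implementation: the hand-written triples stand in for the LLM extraction step, connected components stand in for real community detection, and joining facts into a string stands in for an LLM-written summary. All names in the code are hypothetical.

```python
from collections import defaultdict

# Toy corpus. In a real pipeline an LLM would read each document and emit
# (subject, relation, object) triples; these are hand-written stand-ins.
EXTRACTED = {
    "doc1": [("Kyutai", "released", "Moshi")],
    "doc2": [("Moshi", "is_a", "audio-to-audio model")],
    "doc3": [("Microsoft", "released", "GraphRAG")],
    "doc4": [("GraphRAG", "builds", "knowledge graph")],
}


def build_graph(extracted):
    """Turn per-document triples into an undirected entity graph plus a fact list."""
    graph = defaultdict(set)
    facts = []
    for doc_id, triples in extracted.items():
        for subj, rel, obj in triples:
            graph[subj].add(obj)
            graph[obj].add(subj)
            facts.append((subj, rel, obj, doc_id))
    return graph, facts


def communities(graph):
    """Group entities into connected components (a crude stand-in for community detection)."""
    seen, groups = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        stack, group = [start], set()
        while stack:
            node = stack.pop()
            if node in group:
                continue
            group.add(node)
            stack.extend(graph[node] - group)
        seen |= group
        groups.append(group)
    return groups


def summarize(group, facts):
    """Stand-in for an LLM summary: join the facts whose subject is in the group."""
    return "; ".join(f"{s} {r} {o}" for s, r, o, _ in facts if s in group)


def global_context(extracted):
    """One summary per community, ready to hand to an LLM for a corpus-wide question."""
    graph, facts = build_graph(extracted)
    return [summarize(group, facts) for group in communities(graph)]
```

Calling `global_context(EXTRACTED)` yields one summary per cluster of related entities, even though no single document mentions the whole cluster. That is the gist of why a graph helps with "global" questions: the summaries are built once from the graph rather than recomputed from raw text for every query.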

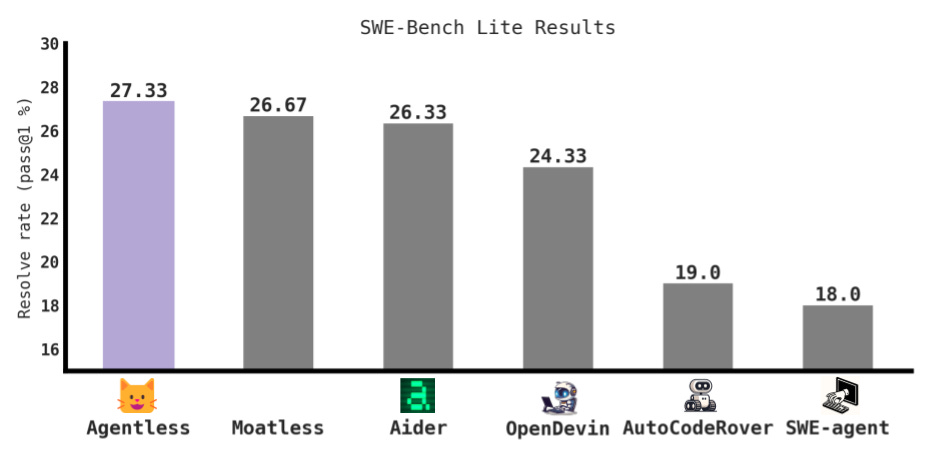

A new AI coding tool that is not an agent, called Agentless, achieves SOTA on SWE-bench Lite at 27.33%. The paper “Agentless: Demystifying LLM-based Software Engineering Agents” explains the approach. It is open source, and the code to run it is on GitHub.

In addition, we covered these AI research papers in our Weekly AI Research Roundup:

InternLM-XComposer-2.5: A Large Vision Language Model with Long Context

RouteLLM: An Open-Source Framework for Cost-Effective LLM Routing

Summary of a Haystack: A Challenge to Long-Context LLMs and RAG Systems

APIGen: Pipeline for Generating Function-Calling Datasets

ESFT: Expert-Specialized Fine-Tuning for Mixture of Experts (MoE)

AI Business and Policy

Security issues plague OpenAI, with a security flaw found in the ChatGPT macOS app and news of a cybersecurity breach last year that OpenAI had not publicly disclosed. The breach was fairly limited and carried out by an individual. However, the news raises concerns about OpenAI's broader cybersecurity practices, and it is a reminder that AI companies are treasure troves for hackers.

Apple is getting an OpenAI board observer seat as part of its agreement with OpenAI. This arrangement is similar to what Microsoft has in its deal with OpenAI.

Instagram’s ‘Made with AI’ label swapped out for ‘AI info’ after photographers’ complaints. Some users were upset that using Photoshop to retouch photos triggered AI content notices that made it look like they were using generative AI. It’s not the same thing:

The new label is supposed to more accurately represent that the content may simply be modified rather than making it seem like it is entirely AI-generated.

Quantum Rise grabs $15M seed for its AI-driven ‘Consulting 2.0’ startup.

AI Opinions and Articles

Tokens are a big reason today’s generative AI falls short:

“It’s kind of hard to get around the question of what exactly a ‘word’ should be for a language model, and even if we got human experts to agree on a perfect token vocabulary, models would probably still find it useful to ‘chunk’ things even further,” Sheridan Feucht, a PhD student studying large language model interpretability at Northeastern University, told TechCrunch. “My guess would be that there’s no such thing as a perfect tokenizer due to this kind of fuzziness.”

Former PM Tony Blair has advice for the new UK Government:

You’ve got to focus on this technology revolution. It’s not an afterthought. It’s the single biggest thing that’s happening in the world today, of a real world nature, that is going to change everything. …

This revolution is going to change everything about our society, our economy, the way we live, the way we interact with each-other. I think we are living through a period of massive change. This is the biggest technology change since the industrial revolution.

How do you use it to transform healthcare, education, the way Government functions? How do you help educate the private sector as to how they can embrace AI in order to improve productivity? This is a huge agenda.

From Ethan Mollick, an essay on how AI disruption proceeds, with small compounding changes, and how quickly it’s changing our understanding of what AI can do.

AI isn't good enough at a task, until it suddenly becomes good enough at a task. As long as AI ability keeps growing, we should be ready for rolling disruption across industries and tasks. - Ethan Mollick