AI Week in Review 25.10.25

DeepSeek-OCR, ChatGPT Atlas, Claude Code on web, Edge Copilot Actions, Vibe Code w Gemini, Unitree H2, Hunyuan World 1.1, PokeeResearch 7B, Krea Realtime 14B, LTX-2, AI Sheets, Director 2.0, Earth AI.

Top Tools

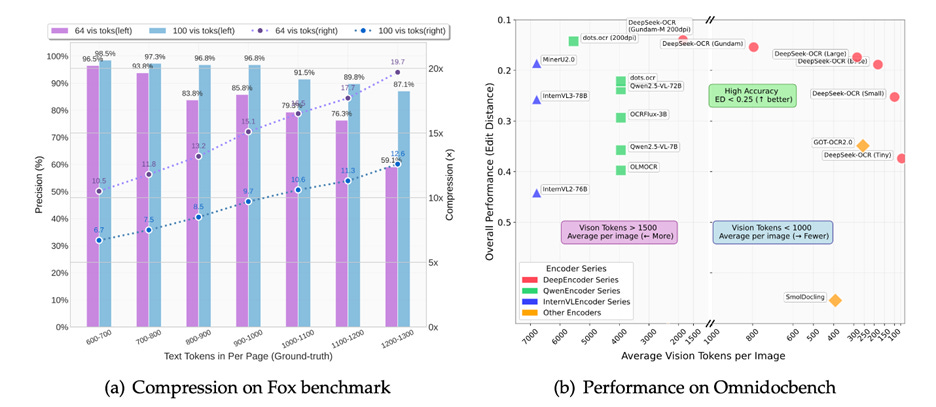

DeepSeek released DeepSeek-OCR, an open 3B-parameter vision-language model (VLM) aimed at robust document understanding from images and PDFs. The release is available under an MIT-style license on Hugging Face, and DeepSeek also shared code and a technical paper, “DeepSeek-OCR: Contexts Optical Compression.”

DeepSeek-OCR is remarkable because it pushes the limits of how far text can be compressed into image (vision) tokens while still retaining high-quality OCR results:

Experiments show that when the number of text tokens is within 10 times that of vision tokens (i.e., a compression ratio < 10×), the model can achieve decoding (OCR) precision of 97%. … This shows considerable promise for research areas such as historical long-context compression and memory forgetting mechanisms in LLMs.

Beyond its use as a highly efficient SOTA OCR model, DeepSeek-OCR offers a new approach to long-context management: vision-based text compression. Rendering text as an image lets the model read it back with up to roughly 10x fewer tokens than reading the text directly, at about 97% decoding precision.
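The compression ratio in the quoted result is the number of text tokens divided by the number of vision tokens that encode the same content. A minimal sketch of that arithmetic, using hypothetical token counts (900 text tokens rendered into an image encoded as 100 vision tokens) chosen only for illustration, with the < 10× threshold taken from the paper's quoted finding:

```python
def compression_ratio(text_tokens: int, vision_tokens: int) -> float:
    """Ratio of text tokens to the vision tokens that encode the same text."""
    if vision_tokens <= 0:
        raise ValueError("vision_tokens must be positive")
    return text_tokens / vision_tokens


def in_high_precision_regime(ratio: float) -> bool:
    """True below 10x, the regime where the paper reports ~97% OCR precision."""
    return ratio < 10


# Hypothetical page: 900 text tokens rendered into an image encoded as 100 vision tokens.
text_tokens, vision_tokens = 900, 100
ratio = compression_ratio(text_tokens, vision_tokens)
saved = text_tokens - vision_tokens  # context tokens saved by reading the image instead

print(f"ratio={ratio:.1f}x, high-precision regime={in_high_precision_regime(ratio)}, "
      f"saved={saved} tokens")
```

Pushing the same page into fewer vision tokens raises the ratio past 10×, where the paper's quoted precision figure no longer applies.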

AI Tech and Product Releases

OpenAI launched ChatGPT Atlas, a desktop AI browser for macOS that integrates browsing with ChatGPT features and optional “browser memories.” OpenAI promotes ChatGPT Atlas as “the browser with ChatGPT built in,” providing a first-party browser experience tied to ChatGPT accounts. Some reviewers have compared it favorably to Perplexity Comet, a competing AI browser, while others suggest it’s promising but not yet a reliable AI tool.

Anthropic introduced Claude Code on the web, a browser-based interface for running parallel coding tasks connected to GitHub. The research preview supports isolated sandboxed environments, task steering, and automatic PR creation. It is available to Pro and Max users. Anthropic outlined its sandbox-based security controls and linked to setup documentation.

OpenAI introduced “company knowledge” in ChatGPT, a feature that centralizes company information from internal docs, FAQs, and terminology so that ChatGPT’s answers reflect an organization’s source of truth. The feature is designed to reduce custom retrieval plumbing and enable consistent, policy-compliant answers in enterprise workspaces. Corporate admins control data governance and access.

Microsoft expanded Copilot Mode, Edge’s AI browsing mode, adding agentic Copilot Actions (e.g., unsubscribing from emails or booking reservations) and Journeys, a feature that groups browsing history into thematic projects. The new-tab chat, integrated search and navigation, and Actions/Journeys are rolling out in preview. U.S. users can turn on Copilot Mode in Edge today.

Google launched an AI vibe-coding tool built on Gemini that lets users create web applications by simply describing their ideas. Users describe an app in a prompt, and the tool writes the code for a complete application and deploys it in minutes. It’s available in AI Studio, uses Gemini 2.5 Pro, and also offers features such as viewing the generated code and restoring checkpoints.

Unitree has released the H2 Destiny Awakening humanoid robot. The 70 kg humanoid has 31 degrees of freedom, a bionic head with facial features, and multiple dexterous-hand options. The H2 is commercially available.

Tencent has released the open-source Hunyuan World 1.1, a world model also called WorldMirror that can reconstruct a 3D scene from text, image, or video input in a single step. Version 1.1 expands the input scope to include video (video-to-3D) and multiple images (multiview-to-3D world creation).

Liquid AI introduced LFM2-VL-3B, a lightweight multimodal (image-text) model designed for efficient edge and server deployment with tunable speed and quality and native 512×512 image handling. It has competitive performance among small open models and is available on Hugging Face.

Qwen updated its Qwen3-VL family with new/updated small and large checkpoints, including Qwen3-VL-2B-Instruct and Qwen3-VL-32B-Instruct (with FP8 variants).

AI2 (Allen Institute for AI) released olmOCR-2-7B-1025-FP8, a quantized OCR model fine-tuned from Qwen2.5-VL-7B for documents, math, tables, and scanned pages. The AI model is on Hugging Face.

Pokee AI announced PokeeResearch-7B, an open-source 7B “deep research” agent fine-tuned from Qwen2.5-7B-Instruct using RLAIF and a reasoning scaffold to decompose, verify, and synthesize across sources. Pokee AI claims SOTA results among 7B research agents. A preview page provides access, and the 7B deep research AI model is on Hugging Face.
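PokeeResearch’s scaffold is described here only as “decompose, verify, and synthesize.” The toy sketch below illustrates that general loop; it is not Pokee AI’s implementation. Everything in it is hypothetical: the in-memory `CORPUS` stands in for web search, decomposition just splits on “ and ”, and verification is simplified to keeping only claims that appear in at least two sources.

```python
from collections import Counter

# Hypothetical in-memory "web": each source maps to the set of claims it contains.
CORPUS = {
    "source_a": {"X was released in 2025", "X has 7B parameters"},
    "source_b": {"X has 7B parameters", "X is open source"},
    "source_c": {"X is open source", "X was released in 2024"},
}


def decompose(question: str) -> list[str]:
    """Toy decomposition: split a compound question into sub-questions on ' and '."""
    return [part.strip() for part in question.split(" and ")]


def search(sub_question: str) -> list[str]:
    """Toy retrieval: collect matching claims from every source (at most one copy per source)."""
    return [claim for claims in CORPUS.values() for claim in claims if sub_question in claim]


def verify(claims: list[str], min_sources: int = 2) -> list[str]:
    """Keep only claims corroborated by at least `min_sources` sources."""
    counts = Counter(claims)
    return sorted(claim for claim, n in counts.items() if n >= min_sources)


def synthesize(findings: list[str]) -> str:
    """Combine verified findings into a single answer."""
    return "; ".join(findings) if findings else "insufficient evidence"


def research(question: str) -> str:
    findings: list[str] = []
    for sub_question in decompose(question):
        findings.extend(verify(search(sub_question)))
    return synthesize(findings)


# The conflicting release-year claims each appear in only one source, so they are dropped.
print(research("released and parameters and open source"))
```

A real research agent would replace each toy step with model calls and live search, but the control flow (split, gather, cross-check, combine) is the part the description names.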

Krea open-sourced Krea Realtime 14B, a real-time video model distilled from Wan 2.1 14B that streams long-form video generation at interactive speeds, generating the first frame in about 1 second. Krea’s technical blog post explains how the model uses self-forcing to make diffusion autoregressive, which enables real-time long videos. Krea Realtime 14B is on Hugging Face.
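What makes streaming possible is that an autoregressive video model generates each chunk of frames conditioned on the frames it has already produced, so early frames can be shown before later ones exist. Self-forcing refers to training on that same self-generated history rather than on ground-truth frames. A toy sketch of the streaming loop, assuming a hypothetical `toy_denoiser` in place of the distilled diffusion model and a single integer in place of each frame (real systems typically reuse a key/value cache of the history rather than reprocessing it):

```python
from typing import Callable, Iterator

Frame = int  # toy stand-in for an image frame


def stream_video(
    denoise_chunk: Callable[[list[Frame]], list[Frame]],
    num_chunks: int,
) -> Iterator[list[Frame]]:
    """Yield video chunk by chunk; each chunk is conditioned on everything generated so far."""
    history: list[Frame] = []
    for _ in range(num_chunks):
        chunk = denoise_chunk(history)  # conditions on the model's own past output
        history.extend(chunk)
        yield chunk  # the caller can display this chunk immediately


def toy_denoiser(history: list[Frame]) -> list[Frame]:
    # Hypothetical model: continue counting from the last generated frame, 3 frames per chunk.
    start = history[-1] + 1 if history else 0
    return [start, start + 1, start + 2]


stream = stream_video(toy_denoiser, num_chunks=4)
first = next(stream)  # available before later chunks are computed
rest = [frame for chunk in stream for frame in chunk]
print(first, rest)
```

Because `stream_video` is a generator, time-to-first-frame depends only on the cost of one chunk, not on the length of the whole video.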

Lightricks released LTX-2, a high-fidelity 4K-capable AI video engine integrated into the LTX suite, with synchronized audio/video generation and multiple performance modes. LTX-2 supports end-to-end creative workflows with storyboards, timeline, and casting consistency. Lightricks provides technical artifacts, documentation, and access to try LTX-2.

Hugging Face added vision to AI Sheets. This update to AI Sheets lets users extract and enrich image data using open models, extending the spreadsheet-like workflow beyond text. The release enables quick prototyping of vision tasks without bespoke Python pipelines.

BrowserBase announced Director 2.0, a free app powered by BrowserBase and Stagehand to automate web tasks. Director 2.0 is intended for agentic “computer use” tasks and deploys in the cloud via BrowserBase. Director is part of the BrowserBase AI automation stack alongside Stagehand, which is an AI-native browser automation framework compatible with Playwright.

Samsung partnered with Perplexity AI to launch a dedicated TV app for its 2025 smart TV lineup, enabling voice and text-based AI searches directly from the screen. The app allows users to query flights, recipes, or news without a phone.

Dropbox is expanding availability of Dash, an AI assistant and search engine that connects to a user’s work apps to boost productivity. It offers plain-language search, AI answers, and content organization, and is now accessible via a new app and integrated into Dropbox itself. Further improvements include multimodal capabilities through Mobius Labs and in-app search via an MCP server.

OpenAI’s Sora team teased “pet cameos,” indicating the video model can include a user’s pet in generated scenes. They also teased more social ways to use Sora, coming soon.

AI Research News

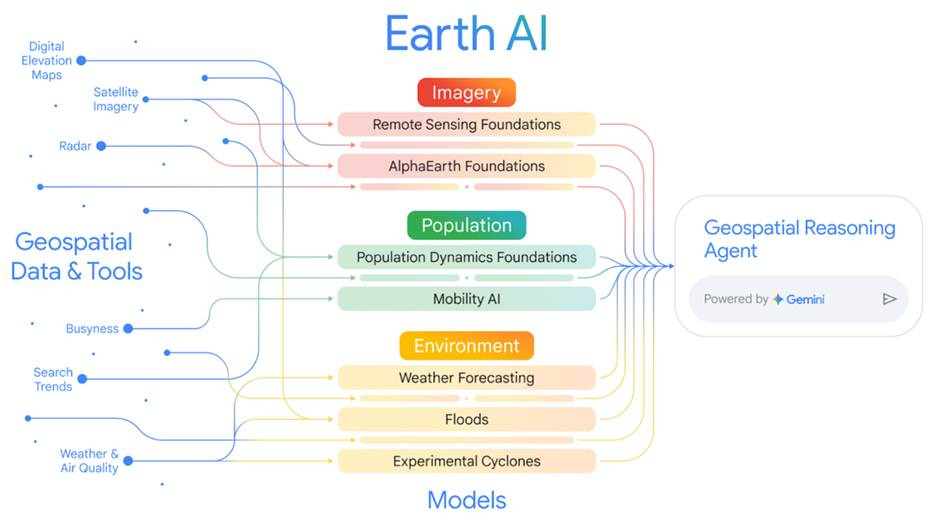

Google Research announced updates and expanded access to Google Earth AI, including new foundation models for Imagery and Population. It also introduced a Gemini-powered geospatial reasoning agent that can chain satellite, population, and environmental signals to answer complex, real-world questions (e.g., storm risk, vulnerable communities). Google Research reported state-of-the-art results on Earth observation tasks and showed improved predictions by fusing embeddings from multiple models.
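The announcement doesn’t detail its fusion method. One common, simple way to combine embeddings from several models is to L2-normalize each one and concatenate them into a single feature vector for a downstream predictor. A minimal sketch under that assumption, with made-up embedding values for a single location standing in for imagery and population model outputs:

```python
import math


def l2_normalize(vec: list[float]) -> list[float]:
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def fuse(*embeddings: list[float]) -> list[float]:
    """Normalize each model's embedding so no single model dominates, then concatenate."""
    fused: list[float] = []
    for emb in embeddings:
        fused.extend(l2_normalize(emb))
    return fused


# Hypothetical embeddings for one location from two different models.
imagery_emb = [3.0, 4.0]          # stand-in for an imagery-model embedding
population_emb = [0.0, 2.0, 0.0]  # stand-in for a population-model embedding

features = fuse(imagery_emb, population_emb)
print(features)
```

A downstream model (for example, a linear regressor for a risk score) would then be trained on `features`; normalizing first keeps an embedding with large raw magnitudes from swamping the others.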

AI Business and Policy

Meta announced it is cutting 600 roles in its AI division as part of an ongoing reorganization, affecting positions including FAIR (Fundamental AI Research) within its superintelligence lab, while it ramps up hiring for AGI research. Meta’s chief AI officer Alexandr Wang cited a shift toward streamlined, agile teams focused on scalable models.

IBM and Groq announced a partnership for agentic AI at enterprise scale. IBM will expose GroqCloud inference via watsonx Orchestrate, enabling low-latency agent workflows. Partnership plans include integrating Red Hat-backed vLLM with Groq’s LPU architecture and supporting IBM Granite models on GroqCloud.

General Motors announced the integration of Google’s Gemini AI assistant into its vehicles starting in 2026. The Gemini assistant, available via over-the-air updates for OnStar-equipped models, connects directly to vehicle systems for navigation and diagnostics, with GM aiming to evolve it into a fully custom GM AI interface for enhanced driver safety and convenience.

Stability AI partnered with Electronic Arts for game development tooling. Stability AI announced a collaboration with Electronic Arts to bring its AI image models and creative AI tools into EA’s game content workflows, continuing Stability AI’s push into enterprise-grade creative tooling.

Netflix declared an “all-in” commitment to generative AI in its Q3 earnings call, with co-CEO Ted Sarandos highlighting AI’s role in accelerating scriptwriting and VFX and projecting cost savings of 15-20% by 2026. Netflix sees AI as a competitive edge in a saturated market, positioning the company to leverage AI tools for content creation, personalization, and production efficiency.

The New York Times reports a backlash against AI data centers is growing globally, with power blackouts in Mexico and water shortages in Chile creating opposition to data centers that strain local grids and aquifers. Chile’s government is grappling with AI investment dilemmas, debating billions in subsidies for tech hubs to support economic development against public fury over resource depletion from data centers.

Meta formed a $27B joint venture with Blue Owl Capital to fund its Hyperion AI data center. Meta created a JV with funds managed by Blue Owl Capital to develop the Hyperion data center campus in Louisiana, with Blue Owl owning 80% and Meta retaining 20%. This deal underscores the massive amounts of capital needed to build out AI infrastructure.

The UK’s AI Security Institute posted an interim “International Scientific Report on the Safety of Advanced AI,” co-authored by 74 AI experts from around the world and intended to inform multilateral policy discussions at upcoming summits. The report summarizes current understanding of general-purpose AI and how to manage its risks.

AI Opinions and Articles

Andrej Karpathy, in a recent interview with Dwarkesh Patel, created a stir with comments about AI timelines and progress. Karpathy said it will take about a decade for AI agents to be fully realized as the equivalent of a human employee, and suggested that AI agents currently lack the intelligence, multimodality, computer-use abilities, and memory to be truly useful.

Some interpreted this as debunking AI optimists, but he clarified in an X post that he’s no AI skeptic:

My AI timelines are about 5-10X pessimistic w.r.t. what you’ll find in your neighborhood SF AI house party or on your twitter timeline, but still quite optimistic w.r.t. a rising tide of AI deniers and skeptics.

He is clear that AI progress will continue, but that the path to AGI and superintelligence will be a grinding continuation of the computing and automation progress so far, not a singularity:

“I see it as like a progression of automation in society...I feel like there will be a gradual automation of a lot of things and super intelligence will be sort of like the extrapolation of that.”

His reasoning on AI progress, learned from bitter lessons trying to get self-driving cars to work, is that each next step in AI improvement will be significantly harder than the previous one:

“It’s a march of nines and every single nine is a constant amount of work so when you get a demo and something works 90% of the time that’s just...the first nine and then you need the second nine and third nine fourth nine fifth nine.”
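A quick worked example of the “march of nines”: each additional nine cuts the failure rate by 10×, while, in Karpathy’s framing, each nine costs roughly a constant amount of work. The “work units” below are purely illustrative (one per nine, as the quote suggests); the point is that a 90%-reliable demo still fails 100,000 times per million attempts.

```python
def failures_per_million(nines: int) -> int:
    """Failures per 1,000,000 attempts at a reliability of `nines` nines (e.g. 2 -> 99%)."""
    return 10 ** (6 - nines)


for n in range(1, 6):
    reliability = 100 * (1 - 10 ** -n)  # success rate as a percentage
    print(f"{n} nine(s): {reliability:.3f}% success, "
          f"{failures_per_million(n):>6} failures per million, work ~ {n} unit(s)")
```

Work grows linearly with each nine while failures shrink exponentially, which is why going from a working demo (one nine) to a dependable product (four or five nines) takes so much longer than the demo itself.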