AI for Coding – AI Coding Assistants

Best AI Coding Assistants: Cursor, Codeium, Replit Agent, Zed AI, Aider, Continue, Copilot.

AI for Coding – Crawl, Walk, Run

As we described in our previous article “AI for Coding – Models,” the advance of AI has led to a rapidly improving ecosystem for AI coding. AI models can understand and generate code better than ever. AI coding assistants and agents are building on these foundation AI models to make the software development process more productive than ever.

Using a “crawl, walk, run” analogy, you might say that AI has recently moved up from crawling to the walking phase. Early AI coding completion assistants based on prior AI models were able to do simple code generation tasks such as inline code completion, helpful but limited in scope.

Now, AI assistants are like a junior programming colleague: They can design an overall complex program, break down the development into tasks, set up the program structure, craft boilerplate code, generate code for different specific functions, test and check code, and correct identified bugs. They can do all these things, but not perfectly, and can get stuck in many cases.

Using these capabilities, some tech-savvy people have generated hype by having AI tools code complete applications quickly. Tweets and videos of “AI coded my app in 5 minutes” and “non-coder builds an application” have gone viral on social media. AI can code up a proof-of-concept application well, but building a robust, production-deployable, complex application requires much more human direction and interaction.

Given current AI capabilities, the best mode of operation is human-in-the-loop. AI coding assistants can help with the whole software development process, but AI struggles as code gets more complex. AI is imperfect; it can make mistakes and generate bugs. Reliability is still the Achilles heel of generative AI.

Another motivation for human-in-the-loop interaction is the need to interact and iterate to specify intent precisely or try out different software ideas. In AI-driven, prompt-based incremental development, the user first asks AI to code a simple version of an application, then iterates on features and functions to get to a desired complete application.

AI coding assistants serve the human-in-the-loop mode of operation well, acting as a “co-pilot” in the software development process.

The Best AI Code Assistants

There are many AI coding assistants available and new ones are getting released frequently, such as Replit’s recently launched Replit Agent and AI code editor Melty from a new startup.

The current top AI coding assistants, based on usage, features, and user feedback, include these proprietary tools: GitHub Copilot, Cursor, Codeium, and Replit Agent. They also include these open-source AI coding assistants: Zed AI, Aider, and Continue.

Before we dive into these, a few other AI coding assistants worthy of mention:

Amazon Q (formerly CodeWhisperer) is Amazon's entry into the AI coding assistant market and fits well into the AWS development flow, helping with coding and deployment.

Cody by Sourcegraph is one of the first Copilot alternatives developed. It offers many standard co-pilot features, including autocomplete, chat, and awareness of your codebase, with good performance.

Double is an emerging AI coding assistant “engineered for performance” built by a startup. They have a VSCode extension that you can try for free.

Copilot

GitHub Copilot is the original and the most widely used AI coding assistant, with good basic features, strong integration with popular IDEs, and an understanding of workspace context. Copilot has been superseded by other AI coding assistants on some features, but it keeps adding useful features of its own, like Workspace.

Cursor

Cursor is a standalone code editor, built as a fork of VSCode modified to work with Cursor’s integrated AI capabilities. As such, it is an AI-first code editor.

Cursor boasts a number of important features: single-line and multi-line code completion; prediction of next edits; codebase repository indexing for code search, code understanding, and grounded AI code generation; chat about code, including with images and web access; applying code generated in chat to the codebase in the editor; and the ability to switch between different AI models.

Cursor’s most powerful feature is Composer, where AI generates or edits code across multiple files and functions. Cursor generates these complex changes, then integrates them once the user accepts with one click, making edits of complex codebases more efficient.

Zed AI

Zed AI is based on the high-performance open-source code editor Zed, now augmented with AI-enabled coding support. Since Zed AI is a built-for-AI code editor, it can be considered an open-source alternative to Cursor that enjoys many of the same features.

Zed AI is unique in being open source yet having a hosted service for its AI integration, powered by Claude 3.5 Sonnet. It has features for code completion, generation, and understanding. It allows manual context building in the prompt with files and text, a more direct and consistent way of interacting with the AI. It also offers access to external knowledge via the “/perplexity” command.

Aider

The open source Aider AI coding assistant bills itself as “AI Pair programming in your terminal.”

Aider is the Linux of AI coding assistants. Unlike other AI coding assistants, Aider is a command-line application that provides a bash-like shell to invoke AI coding commands. Aider commands include mapping your codebase, editing multiple files at once, and, via the “/ask” command, asking about the code and any changes desired. Aider leverages git commands and git commits for better context understanding.

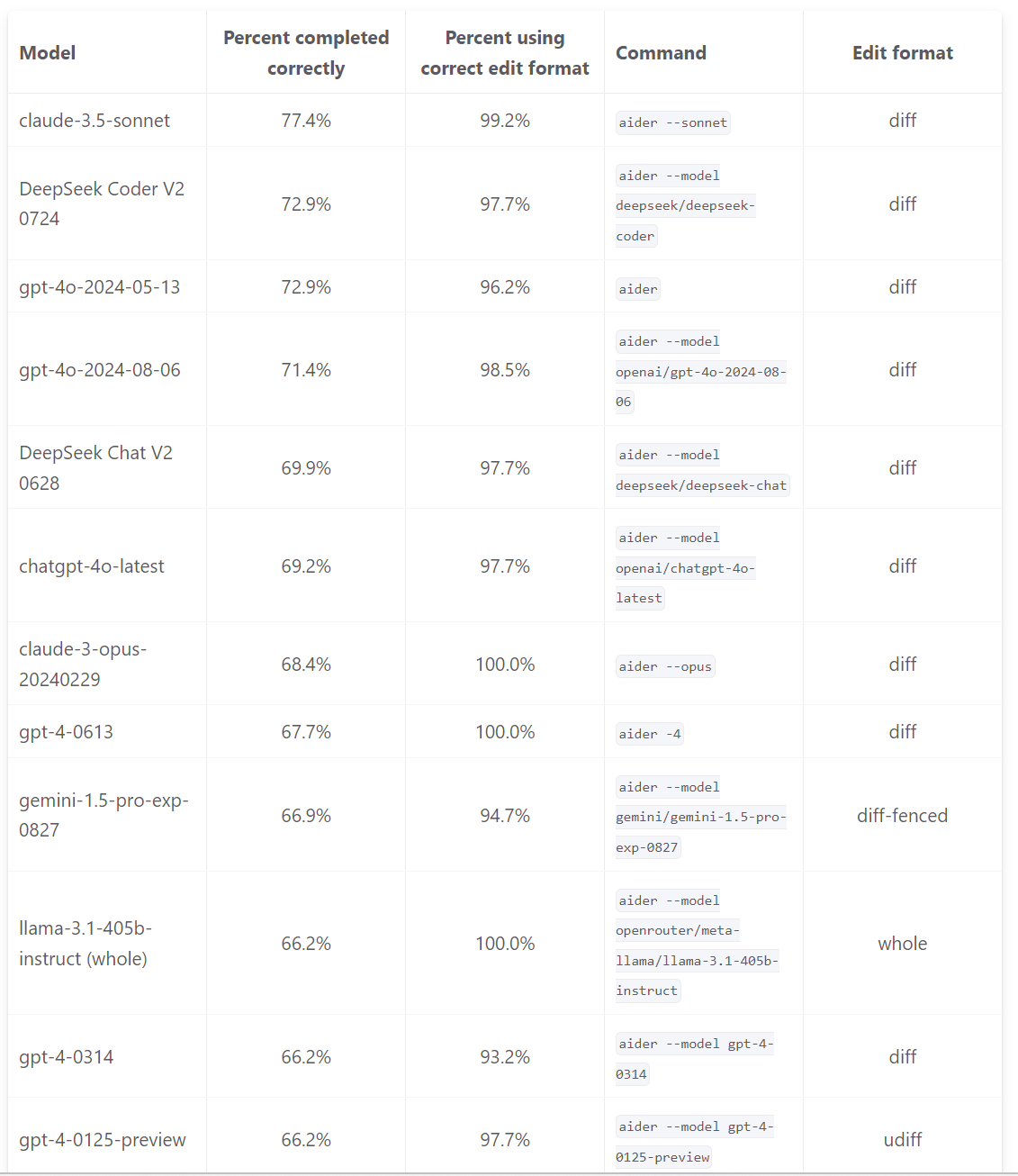

Aider’s Swiss army knife features are powerful, but one challenge is integrating with a code editor. One solution is to run Aider within the terminal of the VSCode editor. You can pair Aider with a wide variety of AI models. The best AI models to run with Aider (and with other AI coding assistants) are listed in the Aider LLM leaderboard. The top-scoring AI model is Claude 3.5 Sonnet.

Aider can produce SOTA results on software development tasks, and it has won a devoted following on the strength of its features.

Codeium

Codeium is a strong contender in AI coding assistants. Codeium has AI code completion, search, and chat capabilities, and supports over 70 programming languages. Codeium is an extension that integrates with over 40 editors.

Unlike offerings that are wrappers around AI models, Codeium has built its own AI models for some functions, such as autocomplete. Codeium has explained its AI model strategy: it combines in-house, fine-tuned, and off-the-shelf AI models, depending on the use case.

While Codeium is not free for enterprise users, they have a generous free tier for individuals.

Replit Agent

Replit is a coding environment for collaboratively building and deploying code. Replit’s initial entry in the AI coding assistant space was Ghostwriter, a Copilot-like AI tool with auto-complete and basic AI code generation. Then last week, Replit shook things up with Replit Agent.

Replit Agent is quite impressive. As with other AI copilots, it can understand and chat about your code (Explain Code), auto-complete (Complete Code), generate code, and edit your code. Replit Agent can also install libraries and dependencies, run a virtual server, write up content, make plans and validate them with you before execution, and integrate with the rest of Replit’s features.

Replit says the AI model used was “tuned by Replit.” Being integrated into the already easy-to-use Replit environment makes it a productivity multiplier, especially for those already using Replit. Replit Core, which gives access to Replit’s AI features, is $25/month, so not cheap, although they have a (very limited) free tier.

Continue

Continue bills itself as the leading open-source AI coding assistant. It is an extension to VSCode or JetBrains, and comes packed with many features, such as tab autocomplete, chat on code, highlight-to-rewrite code, and code indexing.

Continue has an advantage as a very flexible AI coding assistant:

You can connect it to any AI model accessible via an API, including local AI models through Ollama.

The Continue code indexing feature allows granular control over the relevant files to include in contexts.

Customized prompt files make it easy to write, share and invoke custom prompts.

The flexibility and open-source pedigree of Continue mean it can be set up to run entirely on a local machine by connecting it to local open-source LLMs, ensuring privacy and control over sensitive code. This makes it a flexible, customizable, and privacy-conscious option.
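To make the local-model idea concrete, here is a minimal sketch of what talking to a local model through Ollama's HTTP API can look like. It is not how Continue itself is implemented or configured; it only assumes an Ollama server running at its default local address. The model name `codellama` and the helper names are illustrative assumptions.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "codellama") -> urllib.request.Request:
    """Build a POST request for a local Ollama server (model name is an assumption)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "codellama") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as reply:
        return json.loads(reply.read())["response"]


# Building the request needs no running server; the code never leaves the machine.
request = build_request("Explain what a Python decorator does.")
print(request.full_url)
```

Calling `ask_local_model(...)` only works with Ollama running and the named model already pulled, which is why the sketch stops at building the request.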

AI Code Assistant Capabilities

As they improve, AI coding assistants are converging to adopt similar improved capabilities. These key features include code completion as well as generating, editing, and explaining code:

Code Generation: The primary AI coding assistant purpose is to generate code. This can include:

Inline code completion. The AI will automatically complete a line of code as a suggestion.

Generating code from user instructions in the chat interface. This can be a simple function or a complex request for a full application.

Code Editing:

Inline Edit displays the AI’s changes in diff style, all within your editor.

Code Review and Merge reviews and applies changes to your code in the code editor. An AI coding assistant integrated with the coding environment can streamline this task.
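The review-then-apply flow can be sketched in a few lines. This is an illustration using Python's standard `difflib` module, not the mechanism any of the tools above use; the function names and the sample bug are hypothetical.

```python
import difflib


def propose_diff(original: str, proposed: str, filename: str = "example.py") -> str:
    """Return a unified diff between the current code and the AI's proposed code."""
    diff_lines = difflib.unified_diff(
        original.splitlines(keepends=True),
        proposed.splitlines(keepends=True),
        fromfile=f"a/{filename}",
        tofile=f"b/{filename}",
    )
    return "".join(diff_lines)


def apply_if_accepted(original: str, proposed: str, accepted: bool) -> str:
    """Keep the original code unless the human reviewer accepts the change."""
    return proposed if accepted else original


# Hypothetical example: the assistant proposes a fix for an off-by-one bug.
current_code = "def last(items):\n    return items[len(items)]\n"
ai_suggestion = "def last(items):\n    return items[len(items) - 1]\n"

diff_text = propose_diff(current_code, ai_suggestion)
print(diff_text)

# The human stays in the loop: nothing changes until the diff is accepted.
result = apply_if_accepted(current_code, ai_suggestion, accepted=True)
```

Showing the change as a diff first, and applying it only on acceptance, is what keeps the human in the loop during editing.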

Code Explanation:

Chat about code. Users can chat with an AI model via a chat window to ask coding questions.

To explain code, you need to understand it, so a key feature is building the codebase knowledge base by indexing code. The indexed code is used in a RAG system or put into the context during queries.
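As a rough illustration of indexing code and putting the relevant pieces into context, the sketch below ranks files by simple keyword overlap with the question and prepends the best match to the prompt. Real assistants typically use embeddings and chunk-level retrieval; the keyword scoring here is a deliberately simple stand-in, and the file contents and function names are made up.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Split text into lowercase alphanumeric tokens (snake_case names split on "_")."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(files: dict[str, str]) -> dict[str, Counter]:
    """Map each file path to a bag-of-words count of its tokens."""
    return {path: Counter(tokenize(source)) for path, source in files.items()}


def retrieve(index: dict[str, Counter], query: str, top_k: int = 1) -> list[str]:
    """Return up to top_k file paths whose tokens overlap most with the query."""
    query_tokens = tokenize(query)
    scores = {
        path: sum(counts[token] for token in query_tokens)
        for path, counts in index.items()
    }
    ranked = sorted(scores, key=lambda path: scores[path], reverse=True)
    return [path for path in ranked if scores[path] > 0][:top_k]


def build_prompt(files: dict[str, str], index: dict[str, Counter], question: str) -> str:
    """Put the retrieved files into the context ahead of the user's question."""
    paths = retrieve(index, question)
    context = "\n\n".join(f"# File: {path}\n{files[path]}" for path in paths)
    return f"{context}\n\nQuestion: {question}"


# Hypothetical two-file codebase.
codebase = {
    "auth.py": "def login(user, password):\n    return check_password(user, password)\n",
    "billing.py": "def charge(customer, amount):\n    return create_invoice(customer, amount)\n",
}
index = build_index(codebase)
question = "How does login check the password?"
prompt = build_prompt(codebase, index, question)
print(prompt)
```

Only `auth.py` shares words with the question, so it alone is placed in the context. That is the point of indexing: the model sees the relevant code instead of the whole repository.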

Integration: A good AI coding assistant requires good integration with the user’s development environment or code editor. Integration approaches can vary:

Built as a standalone application (Aider).

Built as an extension of an existing code editor (Continue, Copilot, Codeium).

A customized code editor could have AI built-in (Zed AI, or Cursor, which forked VSCode).

Comparisons and Conclusions

I had intended to develop a rigorous feature comparison chart, similar to the April 2024 AI Agent Rubrik developed by Techfrens (5 months old, so out-of-date). However, all the AI coding assistants we reviewed have the above-listed code generation, code editing, and code explanation features.

It is not a matter of what features these AI coding assistants have, but of how well they do them. Judge these AI coding assistants on user experience.

On this score, Cursor leads as a best-in-class AI-first software development environment that dramatically accelerates programming. Cursor has been winning people on vibes and great examples of coding up new apps quickly.

The new Replit Agent has similarly garnered a lot of attention. If you already use Replit’s environment, moving up to their AI agent capabilities is a no-brainer.

As AI coding assistants depend on AI models for their capabilities, the quality of the result depends on the quality of the underlying AI model. While some AI assistants (like Codeium) use customized AI coding models, most leverage frontier AI foundation models. The best AI coding setup comes from pairing a good coding assistant with the best AI model, which currently is Claude 3.5 Sonnet.

AI coding assistants vary in capabilities, but also are rapidly improving and converging. The best choice of AI coding assistant depends on your specific needs. It is best for now to try multiple tools and find out what meets your needs.

To that end, developers should try out the open-source AI coding assistants Zed AI, Aider, and Continue. All three can work with Claude 3.5 Sonnet, have good features, and are free to try, so there’s little downside to trying them out. Codeium has a free tier that is also attractive.

Developers may benefit from combining AI tools, leveraging the strengths of each for different tasks. You can combine Aider in the VSCode terminal with Continue as a VSCode extension. You can take V0 from Vercel, an AI tool for UI development, and combine it with Cursor to build a full-stack application.

As AI code assistants take on more agentic capabilities like multi-file code editing, the line between AI coding assistant and AI agent starts to blur. As AI coding assistants improve on complex tasks, we will move from “walk” to “run,” with much of the software development process automated or at least greatly accelerated.

Great breakdown of the current AI coding landscape, Patrick. I've been deep in this space and your observation about "junior programmer-like collaborators" really resonates. What I've found is that the gap between tools isn't just features - it's how they handle context and maintain coherence across a real project.

I've been building an AI agent system using Claude Code for the past few months, and the human-in-the-loop dynamic you describe is spot on. But I'd add a nuance: the quality of that loop matters enormously. With some tools, you're constantly course-correcting. With others, you're more like a senior dev doing code review - which is a much more productive collaboration pattern.

The reliability issues you mention are real, but I've noticed they correlate heavily with how you structure the interaction. Giving AI full context about your codebase architecture, keeping focused scope, and treating it as a conversation rather than a command-line - these patterns dramatically reduce the "hallucination and unreliability" problems.

One thing missing from most comparisons is how these tools perform on sustained, multi-file projects versus one-off tasks. The demo experience and the daily-driver experience can be wildly different. I actually wrote up my findings after weeks of real-world testing with Claude Code specifically: https://thoughts.jock.pl/p/claude-code-review-real-testing-vs-zapier-make-2026

Would love to hear what your readers are finding works best for larger codebases - that seems to be where the tools really diverge in usefulness.