Building Seemingly Conscious AI

Seemingly Conscious AI is coming, but not as soon as some might think, because critical AI capabilities are still missing. AGI will come first.

The Meaning of Seemingly Conscious AI

AI progress has been phenomenal. A few years ago, talk of conscious AI would have seemed crazy. Today it feels increasingly urgent. - Mustafa Suleyman

The recent thought-provoking essay “We must build AI for people; not to be a person” by Mustafa Suleyman, which we mentioned in our latest AI Weekly, has caused quite a stir.

There’s been a variety of reactions. Some have criticized his perspective, either as self-interested or as an attack on AI alignment and AI safety research. Despite suspicion of his motives as head of AI at Microsoft, there is also widespread acknowledgement that many of his points are on target.

Many people, myself included, agree with his point that treating AI as human simply because it can imitate some human behavior and reasoning can lead us to wrong conclusions.

I spoke of the Anthropomorphic Error in 2023, saying:

AI as it works now and in the near future is both powerful and different enough from human thought that the analogy is no longer useful in helping us be precise and correct about what AI technology is and how it works.

Suleyman comes from a similar perspective, explaining that treating AI as truly conscious misrepresents what AI technology really is. AI doesn’t need to be treated as living or conscious; it has no feelings; deep down, it’s just math and machinery.

Yet Suleyman is also right that AI behaves ever more like a lifelike, relatable human. He calls this Seemingly Conscious AI, defining it this way:

“Seemingly Conscious AI” (SCAI), one that has all the hallmarks of other conscious beings and thus appears to be conscious. … one that simulates all the characteristics of consciousness but internally it is blank. My imagined AI system would not actually be conscious, but it would imitate consciousness in such a convincing way that it would be indistinguishable from a claim that you or I might make to one another about our own consciousness.

Seemingly Conscious AI passes a kind of consciousness Turing test: it can fool us into perceiving it as a conscious being. Viewed from this perspective, Seemingly Conscious AI (SCAI) is a level of AI capability, similar to AGI or Superintelligence.

Milestones to Seemingly Conscious AI

Suleyman’s essay poses questions about how we should handle AI if and when we achieve Seemingly Conscious AI, but here we’ll consider some preliminary questions:

What AI capabilities are required to achieve SCAI?

When will AI be able to pass the Turing test equivalent for SCAI?

On the question of when SCAI will arrive, Suleyman says:

I think it’s possible to build a Seemingly Conscious AI (SCAI) in the next few years.

Such an aggressive timeline is possible with rapidly improving AI, but the challenges of Seemingly Conscious AI are significant and complex. Convincingly emulating human consciousness is a harder challenge than AGI, i.e., reaching human-level performance on a broad range of discrete tasks.

Replicating even the illusion of consciousness (let alone actual consciousness) requires not just the reasoning, memory, and multi-modal perception needed to perform tasks like humans, but also a level of expressed self-awareness and internal motivation that goes far beyond what AI can do today.

As a result, we will achieve AGI before SCAI.

Our assumption is that you cannot convincingly fake consciousness without some level of self-awareness. Some people may believe their AI companions are conscious even though those companions lack real self-awareness, but that would be a subjective impression or self-deception, and others would recognize the AI as not conscious. A benchmark of Seemingly Conscious abilities might help make this judgment more objective.

In my view, SCAI is not imminent, and Suleyman is right to argue that the study of AI welfare is premature and possibly dangerous. Leaving aside specific AI timelines, AI is developing along this path:

Building the Seemingly Conscious AI Brain

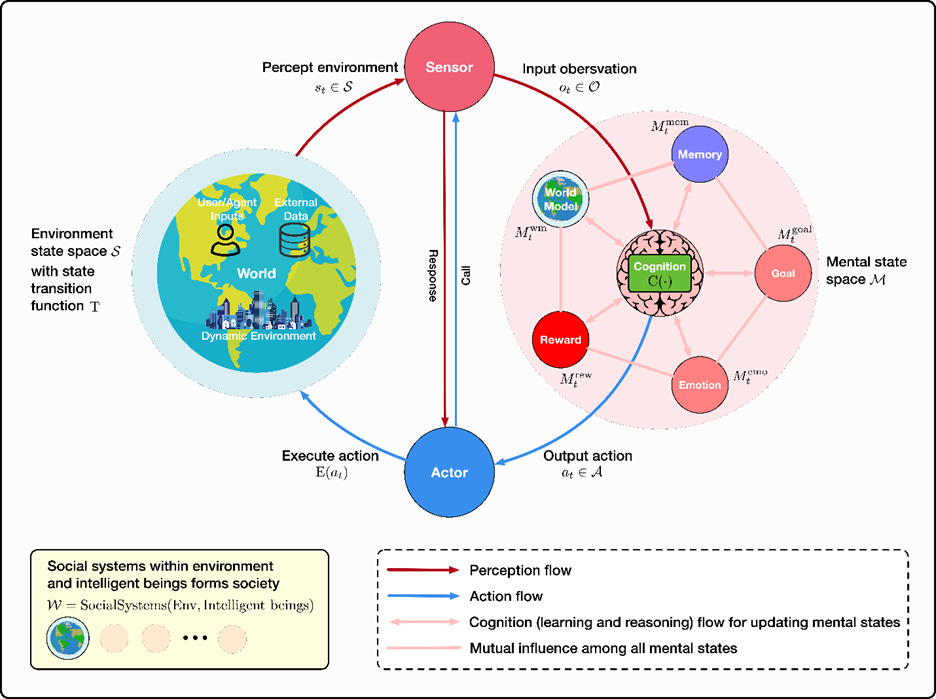

In terms of consciousness and emotional experience, LLM agents lack genuine subjective states and self-awareness inherent to human cognition. Although fully replicating human-like consciousness in AI may not be necessary or even desirable, appreciating the profound role emotions and subjective experiences play in human reasoning, motivation, ethical judgments, and social interactions can guide research toward creating AI that is more aligned, trustworthy, and socially beneficial.

To support the claim that achieving SCAI is a difficult challenge requiring complex capabilities beyond what’s needed for AGI, we turn to a survey of AI agents based on “framing intelligent agents within modular, brain-inspired architectures.”

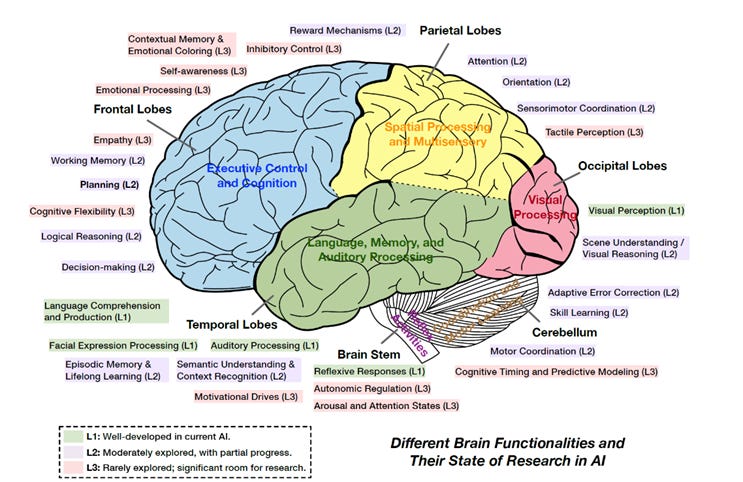

“Advances and Challenges in Foundation Agents: From Brain-Inspired Intelligence to Evolutionary, Collaborative, and Safe Systems” is a 396-page scholarly work published on arXiv in May. The authors structure their survey of AI agents by mapping AI agent reasoning, perception, and operational capabilities onto analogous human brain functions, and by evaluating the status and progress in each area.

Viewing AI through the lens of replicating human thought processes, they identify both areas of progress and gaps in AI capabilities relative to the human mind. This makes the survey an enlightening guide to the AI capabilities needed, and the progress made so far, toward Seemingly Conscious AI.

They illustrate the outline of their study with a figure mapping the human brain and its components to analogous AI capabilities.

Capabilities tied to consciousness, such as self-awareness, emotional processing, and motivational drives, are among the least developed in AI. For example, they note that:

… social-emotional functions involving the frontal lobe (like empathy, theory of mind, self-reflection) are still rudimentary in AI. Humans rely on frontal cortex (especially medial and orbitofrontal regions) interacting with limbic structures to navigate social situations and emotions. … AI agents do not yet possess genuine empathy or self-awareness – these functions remain L3 (largely absent).

The following higher-level cognitive capabilities are essential to further progress in agentic AI:

Logical reasoning and decision-making with reasoning engines and causal reasoning frameworks.

Planning with long-horizon reasoning and hierarchical planners.

Memory, including episodic and working memory and knowledge retrieval.

Cognitive flexibility with dynamic model selection and multi-modal and adaptive reasoning.

These functions, which drive higher AI performance, correspond to the “Executive Control and Cognition” functions of the human brain, i.e., the frontal cortex.

Reaching AGI requires significant improvement in reasoning, planning, memory, and adaptive behavior. By contrast, the consciousness-related functions of self-awareness, emotional control, empathy, and motivational drive matter less for making AI agents work.

An AI agent with strong memory, tool use, reasoning, and planning skills but lacking self-awareness and empathy could still be extremely useful for many tasks. I don’t need my AI coding agent to have human empathy or be self-aware; I just need it to write excellent code automatically.

Would AGI-level AI agents exhibit seemingly conscious traits anyway? Better memory, in particular episodic memory, lets AI remember prior interactions and personalize experiences, which will feel to some like conscious AI. Moreover, AI agents acting autonomously in an environment resemble organisms operating in a natural environment, so they might seem alive. Endowed with reason, memory, and language as well, such an agent could well be ‘seemingly conscious.’

However, without a serious effort to instill the human conscious traits of self-awareness and a sense of identity, such an agent will be just a static reflection of its system instructions. A discerning AI user will not be fooled.

The Cost of Misunderstanding SCAI

The urge to attribute consciousness to AI is innate to humans, but it leads us astray.

Suleyman rightly argues that the study of AI welfare is premature and possibly dangerous, as it treats AI as conscious when it is not. Pursuing AI research that misrepresents AI’s consciousness risks wasting resources on falsely premised challenges. Since our understanding of AI is constantly evolving, this may not be a serious issue, just a possible research dead end.

The real costs and risks go beyond research dead ends to a culture of AI misunderstanding. Many sci-fi movies (Blade Runner, Terminator, Ex Machina) have primed our culture to see AI agents as motivated, conscious automatons. That misperception raises both fear and awe of AI, neither of which is warranted. It will lead to excessively cautious policies (“AI will kill us all”) and to resources wasted on being overly solicitous of the needs of AI machines (“AI welfare”).

Conclusion - Patience

Seemingly Conscious AI is not as imminent as some believe. One can argue that even AI chatbots that pass the Turing test are “seemingly conscious,” since they can fool someone into thinking they are human within an interaction. But we can tell the difference between consciousness and chatbot behavior, and if AI agents reset their memory after a task or interaction, their identity is lost.

Creating Seemingly Conscious AI is a difficult challenge, and it is not needed for effective AI agents. AI progress currently focuses on improving reasoning, memory, and tool use, and on developing ever more advanced AI agents and swarms (see “The Four Dimensions of AI Scaling”). This will take us to AGI but is not enough for Seemingly Conscious AI.

Superintelligent AI agent systems will have superhuman capabilities in tool use (accessing hundreds of tools), memory (perfect recall across an internet’s worth of information), and reasoning (solving large, complex problems beyond human understanding).

This superhuman AI could end up vastly different from what we expect: a superintelligent hive-mind swarm of massively parallel AI agents (an Alien Intelligence) rather than a humanoid AI residing in a single AI model. Such an AI would be alien enough that it might possess self-awareness yet never be confused with human consciousness.

Seemingly Conscious AI is a useful paradigm for AI, but we may never want or need to achieve it as a capability, since it isn’t among the core capabilities needed for incredibly useful AI agents. If it does arise, it will be as a byproduct of high-level reasoning, memory, or other features: an unwanted guest in an AI agent system.

If even seemingly conscious AI is a big hill to climb, actual AI consciousness is much further out. It’s too early to tell whether AI consciousness is a contradiction, an impossibility, or just an extremely difficult challenge (which means we’ll solve it in 10 years).
